What is Algorithmic Bias in Hiring?

Understanding AI Decision-Making in Recruitment

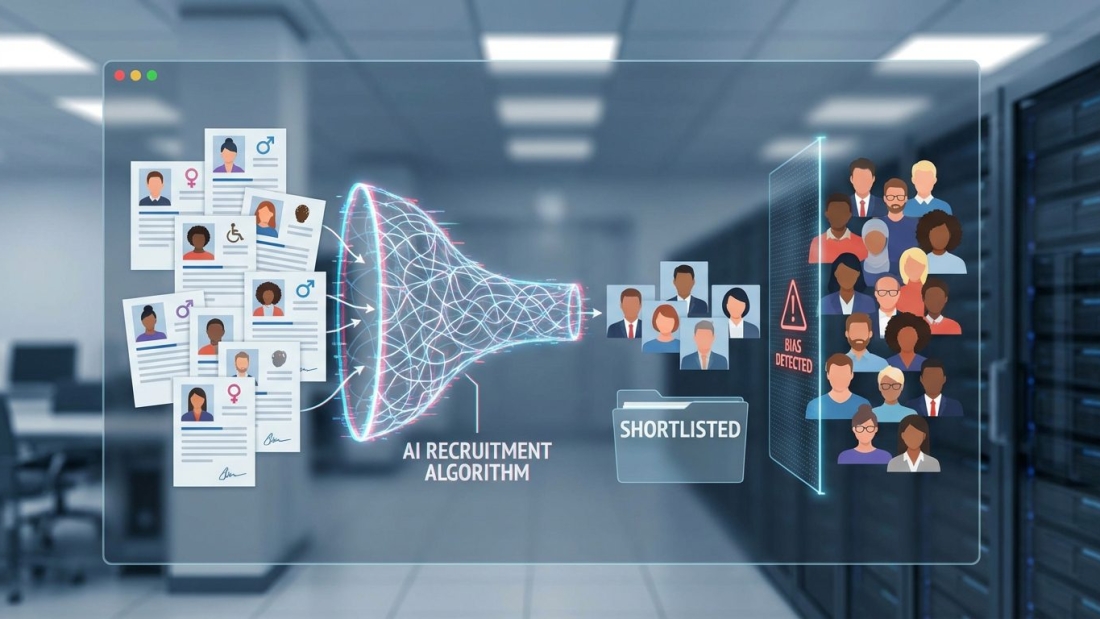

Let’s be honest: hiring has never been perfectly fair. Human recruiters bring personal experiences, unconscious preferences, and sometimes even fatigue into their decisions. That is exactly why many organizations turned toward artificial intelligence in recruitment. The promise sounded simple: remove human bias and let machines make objective decisions. But reality has turned out to be far more complicated.

AI systems used in hiring do not think independently. They learn patterns from past data. If historical hiring decisions favored certain demographics, the algorithm picks up on those patterns and treats them as successful benchmarks. As a result, the system does not remove bias; it quietly learns and repeats it. Many companies now rely on AI tools to screen resumes, shortlist candidates, and even conduct initial interviews, which means these automated decisions impact millions of job seekers.

Think of AI like a mirror reflecting past hiring behavior. If the past was unfair, the reflection will be too. The challenge is that AI often appears neutral, making it harder to spot bias. Unlike a human recruiter, it does not openly express preferences. Instead, it embeds them deep within its logic. That makes algorithmic bias more subtle and, in many ways, more dangerous because it operates at scale without obvious warning signs.

How Bias Gets Embedded in Algorithms

Bias in AI systems rarely has a single cause; it is usually the result of multiple hidden factors working together, often without anyone intending it. One of the main sources is training data. If the dataset used to train the algorithm contains biased hiring outcomes, the AI will treat those outcomes as desirable patterns. For example, if a company historically hired more candidates from certain schools or backgrounds, the algorithm may prioritize similar profiles in future decisions.

Another factor is feature selection. Developers decide what data points the AI should consider, such as education, work experience, or even gaps in employment. These choices may unintentionally disadvantage certain groups. For instance, career breaks might negatively impact candidates who took time off for caregiving responsibilities, which often affects women more than men.

There is also the issue of proxy variables. Even when sensitive attributes like race or gender are removed, the algorithm may still infer them indirectly through other data points such as names, locations, or language patterns. This creates a situation where bias persists even when organizations believe they have eliminated it. Over time, these subtle biases compound, shaping hiring decisions in ways that are difficult to detect but deeply impactful.
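One rough way to check whether a field acts as a proxy is to ask how much better you can guess the sensitive attribute once you know that field. The sketch below is only an illustration under assumptions: the `postcode` and `group` fields, the made-up applicants, and the `proxy_strength` helper are all hypothetical, and the accuracy is measured on the same records it was derived from, so it will look optimistic on real data.

```python
from collections import Counter, defaultdict

def proxy_strength(records, feature, sensitive):
    """Return (baseline_accuracy, feature_accuracy) for guessing `sensitive`.

    baseline: always guess the most common sensitive value overall.
    feature:  guess the most common sensitive value within each feature value.
    """
    overall = Counter(r[sensitive] for r in records)
    baseline = overall.most_common(1)[0][1] / len(records)

    by_value = defaultdict(Counter)
    for r in records:
        by_value[r[feature]][r[sensitive]] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return baseline, correct / len(records)

# Hypothetical, made-up applicants: the sensitive column is "removed" from the
# model, but postcode still carries most of the same information.
applicants = [
    {"postcode": "A", "group": "x"},
    {"postcode": "A", "group": "x"},
    {"postcode": "A", "group": "x"},
    {"postcode": "A", "group": "y"},
    {"postcode": "B", "group": "y"},
    {"postcode": "B", "group": "y"},
    {"postcode": "B", "group": "y"},
    {"postcode": "B", "group": "x"},
]

baseline, with_feature = proxy_strength(applicants, "postcode", "group")
print(f"guessing blind: {baseline:.2f}, guessing from postcode: {with_feature:.2f}")
```

A large jump from the first number to the second suggests the field deserves a closer look before it is fed to a screening model.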

What Does “Intersectional Bias” Really Mean?

The Concept of Intersectionality

To fully understand bias in AI recruitment, it is important to go beyond single categories like gender or race. Real people are defined by multiple identities that interact with each other. Intersectionality refers to the way these identities overlap and create unique experiences of advantage or disadvantage.

For example, the experience of a woman in the workplace is not identical to that of a man. But the experience of a woman from a minority ethnic background is also not identical to that of a woman from a majority group. These overlapping identities create complex layers of bias that cannot be understood by looking at one factor in isolation.

AI systems often struggle with this complexity because they are designed to identify patterns in structured data. When multiple variables interact in nuanced ways, the system may fail to capture those interactions accurately. As a result, certain groups may be disproportionately disadvantaged, even if the algorithm appears fair when evaluated on individual dimensions.

Why Single-Dimension Bias Analysis Falls Short

Many organizations attempt to address bias by analyzing outcomes across single dimensions. They might compare hiring rates between men and women or between different racial groups. While this approach provides useful insights, it does not capture the full picture.

Intersectional bias hides within combinations of identities. A system might show equal outcomes for men and women overall, but still disadvantage women from specific racial or socioeconomic backgrounds. These disparities remain invisible when analysis is limited to one variable at a time.

This limitation creates a false sense of fairness. Companies may believe their systems are unbiased because they pass basic checks, while deeper inequalities continue to exist. Addressing intersectional bias requires more advanced analysis that considers multiple variables simultaneously. Without this level of scrutiny, even well-intentioned AI systems can perpetuate hidden forms of discrimination.
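To make the gap between single-dimension and intersectional checks concrete, here is a minimal sketch. The numbers are invented for illustration, and the `selection_rates` helper and the field names are assumptions rather than any particular tool's API.

```python
from collections import defaultdict

def selection_rates(rows, keys):
    """Selection rate (hired / applicants) grouped by the given attribute names."""
    applied = defaultdict(int)
    hired = defaultdict(int)
    for row in rows:
        group = tuple(row[k] for k in keys)
        applied[group] += row["applicants"]
        hired[group] += row["hired"]
    return {g: hired[g] / applied[g] for g in applied}

# Hypothetical outcome counts, made up for this example.
rows = [
    {"gender": "woman", "race": "A", "applicants": 100, "hired": 50},
    {"gender": "woman", "race": "B", "applicants": 100, "hired": 30},
    {"gender": "man", "race": "A", "applicants": 100, "hired": 40},
    {"gender": "man", "race": "B", "applicants": 100, "hired": 40},
]

by_gender = selection_rates(rows, ["gender"])          # one dimension at a time
by_both = selection_rates(rows, ["gender", "race"])    # intersectional view

print(by_gender)
print(by_both)
```

In this toy data the gender-only check shows identical rates for women and men, while the combined view shows women from group B selected less often than any other subgroup. Real audits also need enough applicants in each subgroup for the rates to mean anything.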

The Rise of AI in Recruitment

Adoption Trends and Statistics

The adoption of AI in recruitment has grown rapidly over the past decade. Organizations are increasingly relying on automated systems to handle tasks that were once performed by human recruiters. These tasks include resume screening, candidate ranking, and even initial interviews.

One of the main reasons for this shift is efficiency. AI can process thousands of applications in a fraction of the time it would take a human recruiter. This speed allows companies to reduce hiring costs and accelerate decision-making. In highly competitive job markets, this advantage can be significant.

However, the widespread use of AI also raises important concerns. As more organizations adopt these tools, the potential impact of bias increases. A single flawed algorithm can influence hiring decisions across multiple roles, departments, and even geographic regions. This scale amplifies both the benefits and the risks of AI in recruitment.

Key AI Tools Used in Hiring

AI recruitment tools come in various forms, each designed to optimize a specific part of the hiring process. Resume screening tools analyze keywords and qualifications to shortlist candidates. Video interview platforms use machine learning to evaluate facial expressions, tone of voice, and communication style. Predictive analytics tools assess the likelihood of a candidate’s success based on historical data.

While these tools offer significant advantages, they also introduce new challenges. For example, video analysis systems may struggle with cultural differences in communication styles. Candidates who do not conform to expected norms may be unfairly evaluated. Similarly, resume screening tools may favor candidates who use specific keywords, regardless of their actual skills or potential.
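The keyword problem is easy to reproduce. The following is a deliberately naive sketch, not how any specific product works: the `keyword_score` function, the keyword list, and both resume snippets are hypothetical.

```python
def keyword_score(resume_text, keywords):
    """Count how many target keywords appear as whole words in the resume."""
    words = set(resume_text.lower().split())
    return sum(1 for keyword in keywords if keyword in words)

keywords = ["python", "sql"]

# Two made-up candidates describing similar work in different words.
resume_a = "Built reports with Python and SQL"
resume_b = "Wrote database queries and automation scripts"

score_a = keyword_score(resume_a, keywords)
score_b = keyword_score(resume_b, keywords)
print(score_a, score_b)
```

The second candidate describes comparable work but scores zero, simply because the expected words are missing.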

These limitations highlight the importance of understanding how AI tools work and what assumptions they make. Without careful oversight, organizations risk relying on systems that prioritize efficiency over fairness.

Real-World Evidence of Bias in AI Hiring

Gender and Racial Bias in Resume Screening

Research has consistently shown that AI recruitment systems can exhibit measurable bias. In some cases, algorithms have been found to favor candidates from certain demographic groups while disadvantaging others with similar qualifications. These patterns often reflect historical hiring practices embedded in the training data.

Gender bias is one of the most commonly observed issues. Some systems have shown a tendency to favor male candidates for technical roles, while others may favor female candidates in different contexts. These inconsistencies suggest that AI does not eliminate bias but rather redistributes it based on learned patterns.

Racial bias is another significant concern. Algorithms may associate certain names, locations, or educational backgrounds with higher or lower suitability for a role. These associations can lead to unequal opportunities for candidates from different racial or ethnic groups, even when their qualifications are identical.

Intersectional Disadvantages Across Groups

When multiple forms of bias intersect, the impact becomes even more pronounced. Candidates who belong to more than one marginalized group often face greater challenges in AI-driven hiring processes. For example, a candidate who is both a woman and from a minority background may experience disadvantages that are not captured by analyzing gender or race alone.

These intersectional disadvantages are particularly concerning because they are often overlooked. Standard evaluation methods may fail to detect them, allowing biased systems to operate unchecked. As a result, certain groups may be consistently excluded from opportunities without any clear explanation.

Addressing these issues requires a deeper understanding of how different forms of bias interact. It also requires a commitment to designing AI systems that account for this complexity rather than ignoring it.

Hidden Bias: The Problem of “Unknown” Data

Missing Demographics and Silent Exclusion

One of the less obvious challenges in AI recruitment is the presence of incomplete or missing data. Not all candidates provide the same level of information, and some data points may be unavailable or difficult to classify. This creates gaps in the dataset that can affect how the algorithm makes decisions.

When demographic information is missing, it becomes harder to evaluate fairness. Organizations may not be able to determine whether certain groups are being disadvantaged because they lack the necessary data. This creates a situation where bias can exist without being detected.

In some cases, candidates with incomplete data may be excluded from consideration altogether. This disproportionately affects individuals with non-traditional career paths, gaps in employment, or unconventional educational backgrounds. As a result, the system may favor candidates who fit a more standardized profile, reinforcing existing inequalities.
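One simple habit that helps here is to report missing demographics as their own group instead of dropping those records from the audit. The sketch below assumes a hypothetical list of candidate records with an `advanced` flag; the field names and the `rates_with_unknown` helper are illustrative only.

```python
from collections import Counter

def rates_with_unknown(candidates, attribute):
    """Return {group: (count, advance_rate)}, keeping missing values as 'unknown'."""
    applied = Counter()
    advanced = Counter()
    for candidate in candidates:
        # A missing key, None, or an empty string all count as "unknown".
        group = candidate.get(attribute) or "unknown"
        applied[group] += 1
        advanced[group] += int(candidate["advanced"])
    return {g: (applied[g], advanced[g] / applied[g]) for g in applied}

# Hypothetical, made-up records.
candidates = [
    {"gender": "woman", "advanced": True},
    {"gender": "woman", "advanced": False},
    {"gender": "man", "advanced": True},
    {"gender": "man", "advanced": False},
    {"gender": None, "advanced": False},
    {"advanced": False},
]

report = rates_with_unknown(candidates, "gender")
print(report)
```

If the "unknown" group is large, or advances at a very different rate from the others, that is a signal the fairness picture is incomplete.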

Why Intersectional Bias is More Dangerous

Compounding Disadvantages

Intersectional bias is particularly harmful because it compounds disadvantages across multiple dimensions. Instead of facing a single barrier, affected individuals encounter multiple overlapping obstacles. These barriers can reinforce each other, making it significantly harder to achieve fair outcomes.

For example, a candidate who faces both gender and racial bias may experience a level of disadvantage that is greater than the sum of its parts. This compounding effect makes it more difficult to identify and address the underlying issues.
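A small worked example shows what "greater than the sum of its parts" can look like in numbers. The rates below are invented, and the `interaction_excess` helper is a hypothetical name; it simply compares the observed combined gap with the gap you would expect if the two single-attribute gaps just added up.

```python
def interaction_excess(reference, only_a, only_b, both):
    """How far the combined gap exceeds the sum of the two single-attribute gaps.

    All arguments are selection rates in whole percentage points.
    """
    gap_a = reference - only_a        # gap for candidates affected by bias A only
    gap_b = reference - only_b        # gap for candidates affected by bias B only
    additive_gap = gap_a + gap_b      # what simple addition would predict
    observed_gap = reference - both   # gap for candidates affected by both
    return observed_gap - additive_gap

# Made-up rates: 40% reference, 35% for each single disadvantage, 20% for both.
excess = interaction_excess(reference=40, only_a=35, only_b=35, both=20)
print(excess)
```

Here each bias alone costs 5 points, so addition would predict a 10-point gap, but the observed gap is 20 points. The extra 10 points is the part that single-attribute analysis cannot explain.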

Amplification at Scale

AI systems operate at a scale that magnifies their impact. A small bias in the data can lead to significant disparities when applied across thousands or millions of decisions. Over time, these disparities can shape entire industries and labor markets.
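A quick back-of-the-envelope calculation illustrates the point. The rates and volumes here are assumptions chosen for the example, and `expected_shortfall` is a hypothetical helper.

```python
def expected_shortfall(applicants, reference_rate, group_rate):
    """Expected number of candidates not advanced because of the rate gap."""
    return round(applicants * (reference_rate - group_rate))

# A two-point gap (20% vs 18%) looks minor for one posting...
small = expected_shortfall(1_000, 0.20, 0.18)
# ...but the same gap applied to a million applications is not.
large = expected_shortfall(1_000_000, 0.20, 0.18)
print(small, large)
```

The gap is identical in both cases; only the number of decisions changes.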

This amplification effect makes it essential to address bias at its source. Once an algorithm is deployed, its influence can spread quickly and become deeply embedded in organizational processes. Correcting these issues after the fact can be challenging and costly.

Causes of Intersectional Algorithmic Bias

Biased Training Data

The quality of training data plays a critical role in determining the fairness of an AI system. If the data reflects historical inequalities, the algorithm will likely reproduce those patterns. Ensuring diverse and representative datasets is essential for reducing bias.

Flawed Model Design

The design of the algorithm also matters. Decisions about which variables to include, how to weight them, and how to evaluate outcomes can all influence the system’s behavior. Poor design choices can introduce bias even when the data itself is relatively balanced.

Lack of Diverse Development Teams

Diversity within development teams is another important factor. Teams that lack diverse perspectives may overlook potential sources of bias. Including individuals from different backgrounds can help identify and address these issues during the design process.

The Legal and Ethical Landscape

Regulations and Compliance

Governments and regulatory bodies are beginning to address the challenges posed by AI in recruitment. New laws and guidelines aim to ensure transparency, accountability, and fairness in automated decision-making. These regulations often require organizations to conduct bias audits and provide explanations for their decisions.
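What a bias audit measures varies by jurisdiction, and the text above does not name a specific metric. As one hedged illustration, the sketch below computes an impact ratio (each group's selection rate divided by the highest group's rate) and flags groups below 0.8, a threshold borrowed from the commonly cited four-fifths rule of thumb. The threshold, the group labels, and the rates are assumptions for the example, not legal guidance.

```python
def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    return {group: rate / best for group, rate in rates.items()}

def flagged(rates, threshold=0.8):
    """Groups whose impact ratio falls below the (assumed) threshold."""
    ratios = impact_ratios(rates)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)

# Hypothetical intersectional selection rates.
rates = {"woman/A": 0.50, "woman/B": 0.30, "man/A": 0.40, "man/B": 0.40}

print(impact_ratios(rates))
print(flagged(rates))
```

Running the audit on intersectional groups, as here, is what lets it surface the subgroup that a gender-only or race-only report would miss.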

Ethical Concerns in AI Hiring

Beyond legal requirements, there are broader ethical considerations. Organizations must consider whether it is appropriate to rely on algorithms for decisions that have a significant impact on people’s lives. Ensuring fairness, transparency, and accountability is not just a legal obligation but a moral one.

Can AI Reduce Bias Instead of Increasing It?

When AI Works Fairly

Despite the challenges, AI has the potential to reduce bias when designed and implemented correctly. By standardizing evaluation criteria and removing subjective judgments, AI can create more consistent hiring processes. In some cases, organizations have reported improvements in diversity and fairness after adopting well-designed AI systems.

Best Practices for Ethical AI Recruitment

To achieve these benefits, organizations must follow best practices such as using diverse datasets, conducting regular audits, and maintaining human oversight. Transparency is also key. Candidates should understand how decisions are made and have the opportunity to challenge them if necessary.

The Future of Fair AI Hiring

Emerging Solutions and Technologies

New approaches to AI development are focusing on fairness and accountability. Techniques such as explainable AI and fairness-aware algorithms aim to make decision-making processes more transparent and equitable. These innovations offer promising pathways for reducing bias in recruitment.
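As one example of what a fairness-aware step can look like, the sketch below implements reweighing, a published pre-processing technique that assigns each training example a weight so that group membership and outcome look statistically independent in the weighted data. It is shown as an illustration only; the article does not say which techniques any given vendor uses, and the `history` data is made up.

```python
from collections import Counter

def reweighing_weights(samples):
    """samples: list of (group, label) pairs.

    Returns a weight per (group, label) pair:
    expected share if independent / observed share.
    """
    n = len(samples)
    group_counts = Counter(group for group, _ in samples)
    label_counts = Counter(label for _, label in samples)
    pair_counts = Counter(samples)
    return {
        (group, label): (group_counts[group] * label_counts[label])
        / (n * pair_counts[(group, label)])
        for (group, label) in pair_counts
    }

# Hypothetical hiring history: group x was hired 6 times out of 10, group y 2 out of 10.
history = [("x", 1)] * 6 + [("x", 0)] * 4 + [("y", 1)] * 2 + [("y", 0)] * 8
weights = reweighing_weights(history)
print(weights)
```

With these weights, the under-hired group's positive examples count for more during training, and the weighted hire rate becomes the same for both groups. It addresses only the imbalance in the labels; proxies and measurement problems need separate attention.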

What Organizations Must Do Next

Organizations must take a proactive approach to addressing intersectional bias. This includes investing in better data, improving model design, and fostering diverse teams. It also requires a commitment to continuous improvement, as new challenges and opportunities emerge.

Conclusion

Intersectional algorithmic bias in AI recruitment is a complex and evolving challenge. It reflects deeper issues within both technology and society. As organizations continue to adopt AI-driven hiring tools, the importance of fairness and accountability cannot be overstated. Addressing these issues requires a combination of technical expertise, ethical awareness, and ongoing vigilance.