Understanding the EU AI Act

What is the EU AI Act?

Artificial intelligence is no longer a futuristic concept. It is already shaping hiring decisions, financial approvals, healthcare diagnostics, and even public opinion. With this level of influence, the need for structured regulation becomes obvious. The EU AI Act is the first major legal framework designed to regulate artificial intelligence systems comprehensively, ensuring that innovation does not come at the cost of human rights and safety.

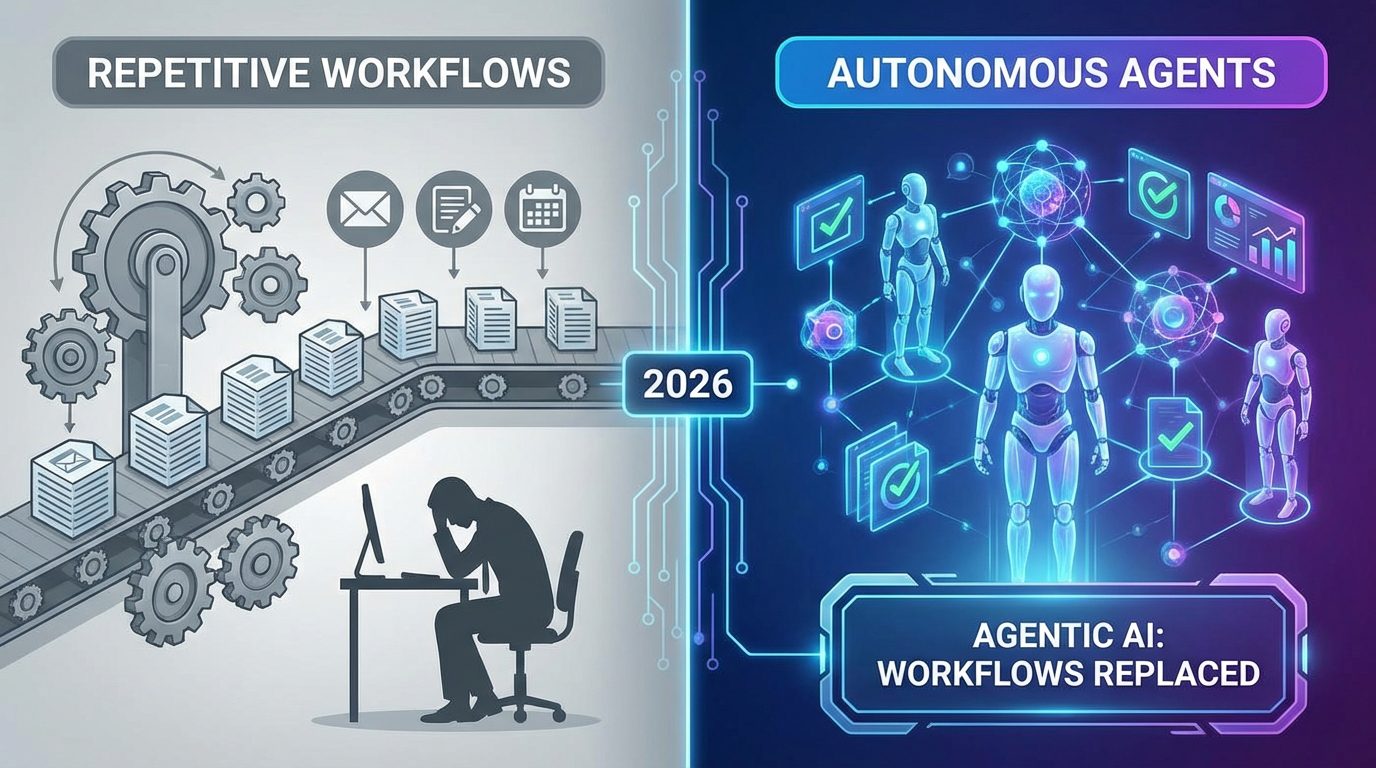

The law entered into force in August 2024, and most of its provisions apply from August 2026, with some high-risk obligations following in 2027. Unlike traditional regulations that apply only within a region, this Act has a much broader scope. If your business develops or uses AI systems that interact with individuals or markets in the European Union, you are required to comply. This global reach makes the EU AI Act one of the most influential regulatory frameworks in modern technology.

The Act introduces a structured approach that categorizes AI systems based on their risk level. Instead of applying the same rules to all technologies, it differentiates between low-risk tools and high-impact systems. This allows businesses to innovate while maintaining accountability. In simple terms, the more risk your AI poses, the stricter the rules you must follow.

Why the EU Created the AI Act

The rise of AI has brought both incredible opportunities and serious concerns. Systems have been shown to produce biased hiring decisions, manipulate public opinion through deepfakes, and make critical decisions without transparency. These risks pushed the European Union to act before the situation escalated further.

The primary goal of the AI Act is to protect individuals while encouraging responsible innovation. It aims to ensure that AI systems are transparent, fair, and accountable. The focus is not on limiting progress but on guiding it in a direction that benefits society as a whole.

Another important reason behind this regulation is global leadership. By introducing strict and clear standards early, the EU positions itself as a leader in ethical AI development. Just as data privacy laws influenced global practices, this Act is expected to shape how AI systems are built and deployed worldwide.

Timeline and Implementation

Key Dates Businesses Must Know

Understanding the timeline of the EU AI Act is critical for businesses planning their compliance strategy. The law follows a phased rollout, giving companies time to adjust while gradually introducing stricter requirements.

- 1 August 2024: the Act entered into force

- 2 February 2025: prohibited practices become illegal

- 2 August 2025: rules for general-purpose AI models begin to apply

- 2 August 2026: most remaining provisions apply, including most high-risk obligations

- 2 August 2027: high-risk rules extend to AI embedded in products already covered by EU product safety legislation

These milestones are not just deadlines; they represent stages of transformation. Businesses need to align their operations, technology, and governance structures with each phase.
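For teams that track compliance in code, the milestones above can be kept as a small lookup. The following is a minimal sketch, not an authoritative schedule: the exact dates are assumptions based on the commonly cited application dates of the Act and should be confirmed against the official text, and the names `MILESTONES`, `milestones_in_effect`, and `next_milestone` are hypothetical.

```python
from datetime import date
from typing import Optional

# Assumed application dates; verify each against the official text of the Act.
MILESTONES = [
    (date(2024, 8, 1), "Act enters into force"),
    (date(2025, 2, 2), "Prohibited practices banned"),
    (date(2025, 8, 2), "General-purpose AI model obligations apply"),
    (date(2026, 8, 2), "General application, including most high-risk rules"),
    (date(2027, 8, 2), "High-risk rules for AI in regulated products apply"),
]


def milestones_in_effect(today: date) -> list[str]:
    """Return the labels of every milestone reached on or before `today`."""
    return [label for start, label in MILESTONES if start <= today]


def next_milestone(today: date) -> Optional[tuple[date, str]]:
    """Return the first milestone strictly after `today`, or None if none remain."""
    upcoming = [(start, label) for start, label in MILESTONES if start > today]
    return min(upcoming) if upcoming else None


if __name__ == "__main__":
    print(milestones_in_effect(date.today()))
    print(next_milestone(date.today()))
```

Keeping the schedule as data rather than scattered conditionals makes it easier to update if the dates are amended.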

Phased Rollout Explained

The phased implementation is designed to balance urgency with practicality. Early stages focus on banning harmful practices and increasing awareness, while later stages introduce detailed compliance requirements for more complex systems.

This approach allows businesses to adapt gradually rather than facing immediate, overwhelming changes. However, the time window should not be seen as an excuse for delay. Companies that start preparing early will have a significant advantage, both in compliance and in building trust with their users.

Risk-Based Approach Explained

The Four Risk Categories

One of the most important aspects of the EU AI Act is its risk-based classification system. This system divides AI technologies into four categories based on their potential impact.

| Risk Level | Description | Regulation Level |
|---|---|---|
| Unacceptable | Harmful or manipulative AI | Completely banned |
| High Risk | Systems affecting safety or rights | Strict compliance |
| Limited Risk | Moderate impact tools | Transparency rules |
| Minimal Risk | Low-impact applications | Minimal regulation |

This framework ensures that regulation is proportional. It prevents overregulation of simple tools while maintaining strict control over systems that can significantly affect people’s lives.

Examples of Each Risk Level

To understand this better, consider real-world scenarios. A social scoring system that ranks citizens based on behavior would fall under unacceptable risk and is banned. AI used in recruitment or credit decisions is considered high risk and must meet strict requirements. Chatbots that interact with customers fall into limited risk and require transparency. Meanwhile, simple recommendation engines used in entertainment platforms are classified as minimal risk.

This structured approach gives businesses clarity. Instead of guessing their obligations, they can easily identify where their systems stand and what actions are required.
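A first-pass triage of an AI inventory could encode the four tiers and the examples above as a lookup. This is only an illustrative sketch: the use-case labels and the `triage` helper are hypothetical, and a real classification depends on legal analysis of the Act rather than a keyword match, so anything not in the map is flagged for manual review.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "Unacceptable"
    HIGH = "High Risk"
    LIMITED = "Limited Risk"
    MINIMAL = "Minimal Risk"


# Regulation level per tier, taken from the risk category table.
REGULATION_LEVEL = {
    RiskTier.UNACCEPTABLE: "Completely banned",
    RiskTier.HIGH: "Strict compliance",
    RiskTier.LIMITED: "Transparency rules",
    RiskTier.MINIMAL: "Minimal regulation",
}

# Hypothetical use-case labels mapped to the tiers named in the examples.
EXAMPLE_USE_CASES = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "recruitment": RiskTier.HIGH,
    "credit_decision": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "entertainment_recommendation": RiskTier.MINIMAL,
}


def triage(use_case: str) -> str:
    """Return a one-line triage summary, or flag the use case for manual review."""
    tier = EXAMPLE_USE_CASES.get(use_case)
    if tier is None:
        return f"{use_case}: not in the example map, needs manual legal review"
    return f"{use_case}: {tier.value} -> {REGULATION_LEVEL[tier]}"


if __name__ == "__main__":
    for case in ("recruitment", "customer_chatbot", "weather_forecast"):
        print(triage(case))
```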

Prohibited AI Practices

What AI Uses Are Banned

Some AI applications are considered too dangerous to be allowed. The EU AI Act clearly defines these prohibited practices to prevent misuse of technology.

Banned uses include systems that manipulate human behavior in harmful ways, social scoring mechanisms that rank individuals based on their behavior or personal characteristics, and certain types of biometric surveillance in public spaces, such as real-time remote biometric identification by law enforcement outside narrowly defined exceptions. These restrictions are designed to protect fundamental rights and prevent abuse.

High-Risk AI Systems

Compliance Requirements

High-risk AI systems are subject to the strictest regulations under the Act. These systems have the potential to significantly impact individuals’ lives, which is why they must meet detailed compliance requirements.

Organizations must implement risk management systems to identify and mitigate potential issues. They need to use high-quality datasets to reduce bias and ensure fairness. Documentation must be thorough, covering every aspect of the system’s design and operation. Human oversight is also essential, ensuring that decisions are not left entirely to machines.

Security is another critical requirement. High-risk systems must be resilient against cyber threats and capable of maintaining reliability under different conditions. These measures ensure that AI systems operate safely and predictably.
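The requirements described above lend themselves to a simple gap checklist per system. The sketch below is one possible way to track them, under the assumption that each requirement can be marked as complete or not. The requirement labels paraphrase this section and are not terms defined by the Act, and `SystemRecord` is a hypothetical name.

```python
from dataclasses import dataclass, field

# Illustrative labels paraphrasing the requirements in this section.
HIGH_RISK_REQUIREMENTS = (
    "risk_management",
    "data_quality",
    "documentation",
    "human_oversight",
    "security_and_robustness",
)


@dataclass
class SystemRecord:
    """Tracks which high-risk requirements a given AI system has satisfied."""

    name: str
    completed: set[str] = field(default_factory=set)

    def gaps(self) -> list[str]:
        """Return outstanding requirements in checklist order."""
        return [r for r in HIGH_RISK_REQUIREMENTS if r not in self.completed]

    def is_ready(self) -> bool:
        """True only when no requirement is outstanding."""
        return not self.gaps()


if __name__ == "__main__":
    screener = SystemRecord("cv_screener", {"risk_management", "documentation"})
    print(screener.gaps())
    print(screener.is_ready())
```

In practice each item would need evidence behind it, such as a risk register or test report, rather than a single flag.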

Industries Affected Most

Several industries are heavily impacted by these regulations. Healthcare systems using AI for diagnosis, financial institutions relying on algorithms for credit decisions, and companies using AI for recruitment all fall into the high-risk category.

In these sectors, the consequences of errors can be severe, making compliance even more important. Businesses operating in these areas must prioritize regulatory readiness as part of their overall strategy.

Transparency and Accountability

Disclosure Obligations

Transparency is a key principle of the EU AI Act. Users have the right to know when they are interacting with AI systems. This requirement applies to chatbots, automated decision-making tools, and even synthetic media such as deepfakes.

Businesses must clearly disclose the use of AI and provide understandable information about how these systems operate. This is not just about legal compliance; it is about building trust. When users understand how AI works, they are more likely to accept and rely on it.

Accountability also plays a crucial role. Organizations must take responsibility for their AI systems, ensuring they operate within ethical and legal boundaries. This shift encourages companies to prioritize long-term trust over short-term gains.

Penalties and Enforcement

Fines and Business Risks

The penalties for non-compliance under the EU AI Act are significant. For the most serious violations, such as deploying a prohibited practice, fines can reach up to 35 million euros or 7 percent of global annual turnover, whichever is higher. Lower ceilings apply to less severe breaches.
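To see how the ceiling scales with company size, the arithmetic can be sketched as follows. This assumes the top penalty tier of 35 million euros or 7 percent of worldwide annual turnover, whichever is higher. The function name and parameters are hypothetical, and the sketch ignores the different treatment the Act gives smaller companies.

```python
def max_fine_eur(global_turnover_eur: float,
                 fixed_cap_eur: float = 35_000_000,
                 turnover_percent: float = 7) -> float:
    """Upper bound of the fine: the higher of the fixed cap and the turnover share.

    Defaults assume the top penalty tier; pass other values for lower tiers.
    """
    if global_turnover_eur < 0:
        raise ValueError("turnover must be non-negative")
    turnover_based = global_turnover_eur * turnover_percent / 100
    return max(fixed_cap_eur, turnover_based)


if __name__ == "__main__":
    print(max_fine_eur(1_000_000_000))  # turnover-based figure is higher here
    print(max_fine_eur(100_000_000))    # fixed cap is higher here
```

Under these assumptions, a company with 1 billion euros in turnover faces a ceiling of 70 million euros, while for a company with 100 million euros in turnover the fixed 35 million euro figure is the higher of the two. These are maximums; the actual fine in any case is set by the authorities.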

However, financial penalties are not the only risk. Authorities have the power to remove non-compliant AI systems from the market. This can disrupt operations, damage reputations, and result in lost revenue.

For businesses, the message is clear. Compliance is not optional. It is a critical component of risk management and long-term success.

Global Reach of the EU AI Act

Why Non-EU Companies Must Care

The EU AI Act has a global impact because of its extraterritorial scope. It applies not only to companies based in the European Union but also to those whose AI systems affect individuals within the region.

This means that businesses in the GCC, Asia, and other parts of the world must comply if they want to operate in the European market. The Act effectively sets a global standard for AI regulation.

Ignoring these requirements can result in restricted access to one of the world’s largest markets. On the other hand, compliance can open doors to international opportunities and partnerships.

Impact on GCC Businesses

Regulatory Alignment in the Gulf

GCC countries are rapidly advancing in artificial intelligence, investing heavily in innovation and digital transformation. Aligning with the EU AI Act can strengthen their position in the global market.

By adopting similar standards, businesses in the Gulf can enhance their credibility and attract international collaborations. It also simplifies entry into European markets, reducing regulatory barriers.

This alignment is not just about compliance. It is about positioning the region as a leader in responsible AI development.

Opportunities for Innovation

While regulation often feels restrictive, it can actually drive innovation. The EU AI Act encourages businesses to develop systems that are not only powerful but also ethical and trustworthy.

For GCC companies, this creates an opportunity to differentiate themselves. By focusing on responsible AI, they can build stronger relationships with customers and partners.

Innovation within a structured framework leads to more sustainable growth. It ensures that technological advancements benefit society while minimizing risks.

Strategic Actions for Businesses

How to Prepare for Compliance

Preparing for the EU AI Act requires a proactive approach. Businesses should start by conducting a thorough assessment of their AI systems to determine their risk categories.

Developing internal governance structures is essential. This includes creating policies, assigning responsibilities, and ensuring proper documentation. Training employees on AI ethics and compliance is equally important.

Organizations should also invest in transparency mechanisms, ensuring that users are informed about AI interactions. Regular audits and updates can help maintain compliance as regulations evolve.

Taking these steps early not only reduces risk but also provides a competitive advantage. Companies that adapt quickly will be better positioned in a rapidly changing regulatory environment.

Conclusion

The EU AI Act represents a significant shift in how artificial intelligence is regulated. It introduces clear rules that prioritize safety, transparency, and accountability while still allowing innovation to thrive.

For businesses in the GCC and around the world, this is both a challenge and an opportunity. Those who ignore the regulation risk facing penalties and losing access to key markets. Those who embrace it can build stronger, more trustworthy systems and gain a competitive edge.

The future of AI is not just about what technology can do. It is about how responsibly it is used. The EU AI Act sets the tone for this future, shaping the way businesses develop and deploy artificial intelligence for years to come.